It’s 2017, the year Gartner estimated that more revenue would come from selling Solid State Drives (SSDs) than from their slower, spinning ancestors, Hard Disk Drives (HDDs). [1] To some, this seems like a no-brainer, but many experts believe the estimate is way off as HDDs continue to improve in capacity, MTBF (Mean Time Between Failures), performance, and price. As more enterprises adopt SSDs, there have been shortages of the flash memory used in their construction, which has driven prices up and created supply chain delays. That’s why we’re here: to explore situations where you really need SSDs versus when something else may be good enough (or better!) for your use.


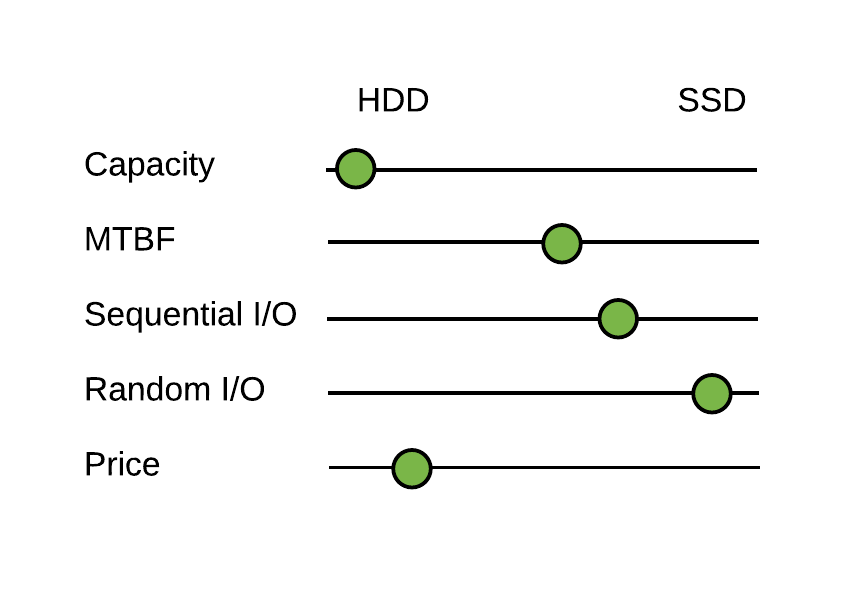

Let’s quickly review the primary differences that drive the choice: capacity, failure rate, performance (both sequential and random I/O), price, and sourcing. This is a high-level comparison only; data-center architects often consider many more factors, such as heat, power, form factor, compatibility, vendor preference, and ease of installation.

- Capacity: HDD is currently the winner here. You can easily buy 10TB+ drives today, and it’s estimated we could see 100TB drives by 2020. If your goal is to cram as much storage as you can into the smallest space possible, then the density of HDDs wins.

- Failure Rate: Both SSDs and HDDs are making amazing improvements in reliability. With some SSDs and HDDs reporting MTBFs of more than 1.5M hours, we consider these equivalent. A year has about 8,760 hours, so at that MTBF, if you operate roughly 170 drives for a year, you can expect an average of one failure.

- Performance: Both SSDs and HDDs are great at sequential I/O throughput. SSDs are the clear winner on random I/O, with service times measured in microseconds rather than the milliseconds an HDD needs when moving parts must seek to the I/O location on each operation. If you’re primarily doing sequential I/O (like recording video or time series data), then HDDs could serve you well. However, if you require the fastest service times and optimal performance for random I/O, then you need SSDs. It’s important to understand your workload and isolate your HDDs for sequential I/O workloads; otherwise, other tasks will move the device’s head and you’ll see performance degrade to that of a random I/O workload.

- Price: Price is a tricky subject: do you want to optimize spend over space or over time? That is, do you measure “capacity” in gigabytes or in transactions per second? If you’re looking at transactions per second per dollar, then you may need SSDs. However, most comparisons use $/GB metrics, and with HDDs giving 8-10x more capacity per dollar spent, I’d consider HDDs the winner here in most cases.

- Sourcing: We’ve already seen flash shortages disrupt the availability of SSDs, making large purchases difficult at times. That means higher costs and longer lead times to get drives when you need them. There are many reasons for flash shortages (material shortages, demand, etc.), and it’s difficult to predict when they’ll happen. If you need SSDs, plan your sourcing process far ahead so you get the devices you need when you need them! HDDs are currently easier to source, so in some urgent storage scenarios, SSDs may not even be an option.
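The failure-rate arithmetic in the list above is easy to check yourself. Here is a minimal sketch, assuming a constant failure rate (the usual way to read an MTBF figure) and a 365-day year. The function names are made up for this example.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def expected_annual_failures(drive_count: int, mtbf_hours: float) -> float:
    """Expected number of failures in one year across a fleet of drives.

    Assumes a constant failure rate, so failures scale with total drive-hours.
    """
    drive_hours = drive_count * HOURS_PER_YEAR
    return drive_hours / mtbf_hours


def drives_per_annual_failure(mtbf_hours: float) -> float:
    """Fleet size at which you expect about one failure per year."""
    return mtbf_hours / HOURS_PER_YEAR


if __name__ == "__main__":
    mtbf = 1_500_000  # hours, the MTBF figure quoted in the list above
    print(f"{drives_per_annual_failure(mtbf):.0f} drives per expected failure")
    print(f"{expected_annual_failures(1000, mtbf):.2f} failures/year for 1,000 drives")
```

Plug in the MTBF from your own drive's spec sheet. Keep in mind that vendor MTBF numbers describe a population of drives under rated conditions, so treat the result as a planning estimate rather than a prediction.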

HDD vs SSD on Common Attributes


What’s This Mean for Customers of AMPS?

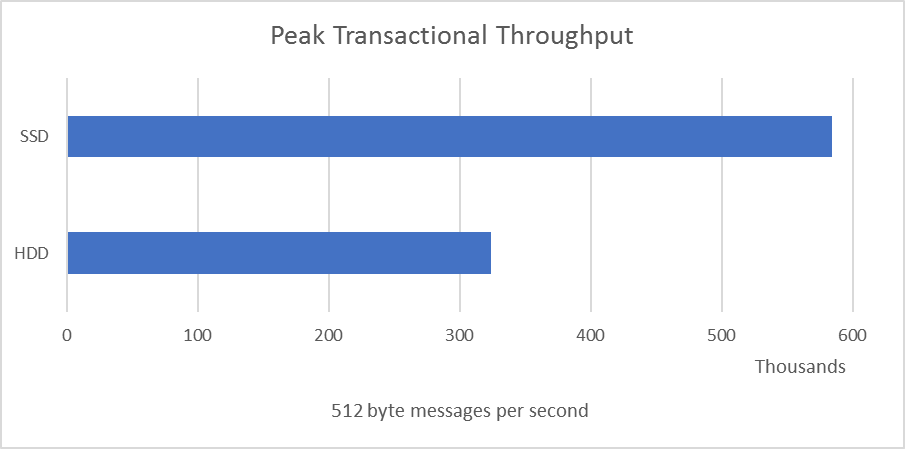

AMPS can benefit from fast storage on several dimensions. The most obvious one is transactional volume in its transaction log. Because AMPS writes to the transaction log durably (it doesn’t acknowledge that a message is stored until the device has completed the write), the throughput of the device dictates the transaction throughput of the system. For this comparison, we used commodity devices locally attached to AWS cloud instances, and each device was completely isolated, so this is the best-case scenario for sequential write performance on these devices. (Important: Using higher-end, enterprise SSDs, AMPS can easily do 5x these numbers.)
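To get a feel for why durable writes tie throughput to the device, here is a rough sketch that appends messages to a file and waits for the device after each one. This is not how AMPS is implemented (a real transaction log would typically batch many messages per sync). The file name, message size, and function name are assumptions for illustration.

```python
import os
import tempfile
import time


def durable_append_rate(path: str, message: bytes, count: int) -> float:
    """Append `count` messages, syncing after each, and return messages/second."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_APPEND, 0o644)
    try:
        start = time.perf_counter()
        for _ in range(count):
            os.write(fd, message)
            # Do not "acknowledge" until the OS reports the data is on the device.
            os.fsync(fd)
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    return count / elapsed if elapsed > 0 else float("inf")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        journal = os.path.join(tmp, "journal.bin")  # hypothetical file name
        rate = durable_append_rate(journal, b"x" * 512, 200)
        print(f"{rate:,.0f} durable appends/second")
```

Run it against a directory on the device you care about. The per-sync rate it reports is a pessimistic lower bound, but comparing an HDD with an SSD this way shows the gap in service time directly.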

Peak throughput comparison


You can see that an HDD does well at 300K messages per second. There are many messaging problems where that’s easily enough capacity. However, you need to be careful to isolate the AMPS transaction log to prevent other applications or services from moving the drive head. If you’re over 100-300K messages a second, you’ll want to look at an SSD. If you require more than 300K/s, you may want to start considering higher-end SSD devices as well, where you can achieve 2-3 million transactions per second.

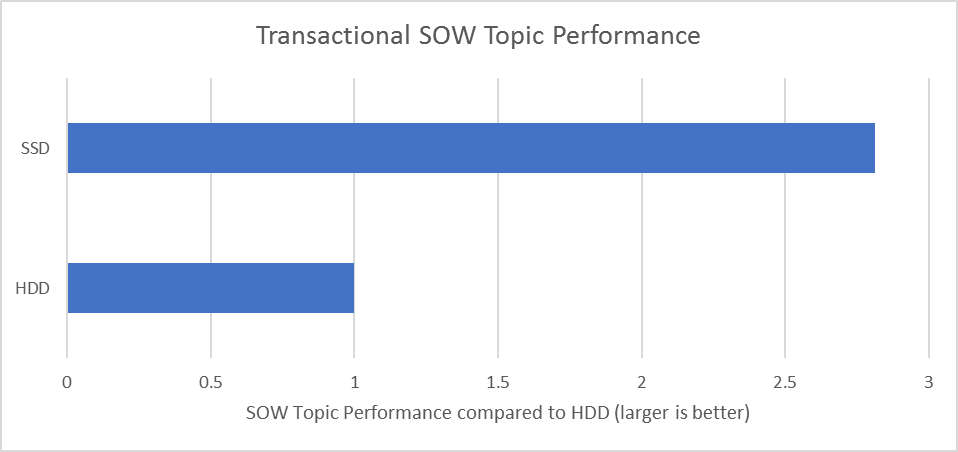

If you have a transactional State-of-the-World (SOW) topic, then you’ll have a workload that’s considered random I/O, and the performance of your AMPS deployment can greatly benefit from SSDs. When AMPS updates records for transactional SOW topics, it will typically update the on-disk record image in place. For most workloads this has all the characteristics of random I/O, which means abysmal HDD performance. It also means that instance recovery or SOW topic rebuilds can be done much faster on SSDs.
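To see why in-place updates look like random I/O, consider a toy record store with fixed-size slots. This is only an illustration of the access pattern, not the AMPS SOW file format. The record size, file name, and helper names are hypothetical.

```python
import os
import random
import tempfile

RECORD_SIZE = 128  # hypothetical fixed slot size, for illustration only


def create_store(path: str, record_count: int) -> None:
    """Preallocate a file of empty, fixed-size record slots."""
    with open(path, "wb") as f:
        f.write(b"\0" * (RECORD_SIZE * record_count))


def update_in_place(f, slot: int, payload: bytes) -> int:
    """Overwrite one record slot and return the byte offset that was written."""
    if len(payload) > RECORD_SIZE:
        raise ValueError("payload larger than record slot")
    offset = slot * RECORD_SIZE
    f.seek(offset)  # on an HDD, this is where the head has to move
    f.write(payload.ljust(RECORD_SIZE, b"\0"))
    return offset


def read_record(f, slot: int) -> bytes:
    """Read one slot back, stripping the zero padding."""
    f.seek(slot * RECORD_SIZE)
    return f.read(RECORD_SIZE).rstrip(b"\0")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "sow.dat")  # hypothetical file name
        create_store(path, record_count=10_000)
        rng = random.Random(42)
        with open(path, "r+b") as f:
            for _ in range(5):
                slot = rng.randrange(10_000)
                print(update_in_place(f, slot, b"price=101.25"))
```

The printed offsets jump around the file because the slot depends on which key was updated, not on when the update arrived. That is the pattern an HDD handles poorly.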

Transactional SOW performance

Event Stream Replay Performance

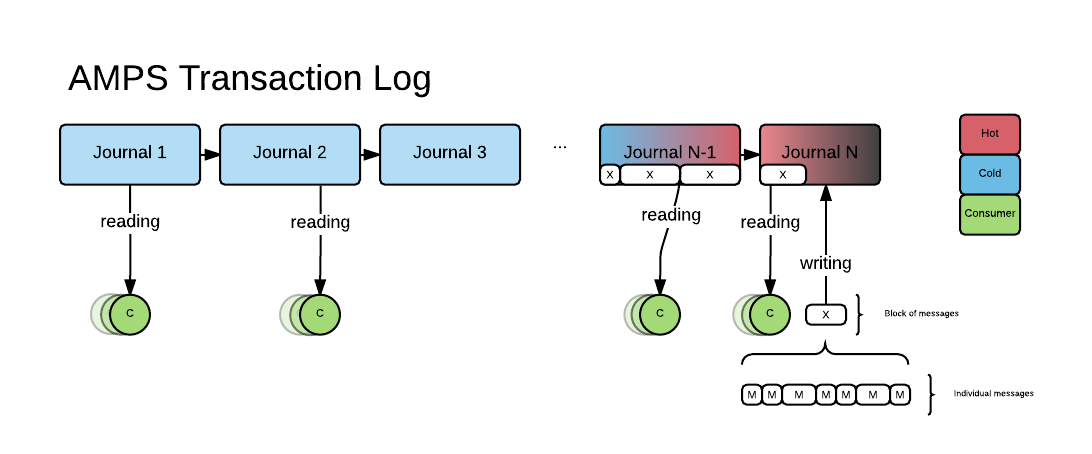

AMPS has a sophisticated transaction log replay system that allows users to replay content-filtered event streams at a specific rate. For example, you could replay an event stream at 4x the real-time rate, 5MB/s, or 50,000 messages per second. Some users have thousands of these replay consumers at any given time, which can put tremendous stress on the storage device in environments where the total persisted event stream is much larger than the amount of memory available on the host.

The AMPS transaction log is composed of individual journal files, where the latest journal file is being written to and any of the journal files could be read from concurrently. Event stream replay consumers are individually sequential, but in aggregate they perform like a randomized I/O workload. Because of that randomized I/O pattern, write performance to the head journal will suffer in HDD environments with several concurrent replay consumers.
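A small simulation makes the "sequential individually, random in aggregate" point concrete. This sketch assumes the device services consumers round-robin, one block at a time, which is a simplification of real I/O scheduling. The function names, offsets, and block size are invented for the example.

```python
from typing import List


def interleaved_offsets(start_offsets: List[int], block_size: int,
                        reads_per_consumer: int) -> List[int]:
    """Round-robin the block requests of several sequential readers."""
    requests = []
    for step in range(reads_per_consumer):
        for start in start_offsets:
            requests.append(start + step * block_size)
    return requests


def mean_seek_distance(requests: List[int], block_size: int) -> float:
    """Average gap between the end of one request and the start of the next."""
    if len(requests) < 2:
        return 0.0
    gaps = [abs(nxt - (prev + block_size))
            for prev, nxt in zip(requests, requests[1:])]
    return sum(gaps) / len(gaps)


if __name__ == "__main__":
    block = 4096
    one = interleaved_offsets([0], block, 100)
    many = interleaved_offsets([0, 50_000_000, 400_000_000], block, 100)
    print("one consumer:   ", mean_seek_distance(one, block))
    print("three consumers:", mean_seek_distance(many, block))
```

A single consumer never seeks: each request starts where the last one ended. Add consumers positioned in different journals and almost every request lands far from the previous one, which is exactly what an HDD head has to chase.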

In the following diagram, you can see a high-level view of the AMPS transaction log, showing replay consumers reading from “cold” journals as well as the head journal being written to. We’ve designed AMPS so that cold journals can be archived to cheap-n-deep HDD (or even NAS) so that certain deployments only need SSD storage to cover their “hot” data where the data is being read and written to.

Transaction log overview


Warnings & Tips

Slow SSDs: Don’t buy SSDs assuming any SSD will be better than any HDD. There are SSD devices that are far slower than HDDs, so check the drive specs before buying!

File System: The choice of file system can make a huge difference in performance for some workloads, but the mount options for the device matter even more. For example, if you’re using the popular ext4 file system, do you need journaling, or can you turn it off for a performance benefit? Can you use noatime and nodiratime to turn off the metadata writes that update the access time on every read?
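If you want to check how a device is currently mounted, a sketch like the following parses text in the Linux `/proc/mounts` format and reports whether access-time updates are still possible. The mount point `/data/amps` and the function names are assumptions for the example. On Linux, `noatime` also implies `nodiratime`, so checking for `noatime` alone is enough here.

```python
from typing import Set


def mount_options(mounts_text: str, mount_point: str) -> Set[str]:
    """Return the option set for `mount_point` from /proc/mounts-style text.

    Each line has the form: device mount_point fs_type options dump pass
    """
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 4 and fields[1] == mount_point:
            return set(fields[3].split(","))
    raise KeyError(mount_point)


def updates_access_times(options: Set[str]) -> bool:
    """True if reads may still trigger access-time metadata writes."""
    return "noatime" not in options


if __name__ == "__main__":
    # Hypothetical sample; on a Linux host you could read /proc/mounts instead.
    sample = (
        "/dev/sda1 / ext4 rw,relatime 0 0\n"
        "/dev/nvme0n1p1 /data/amps ext4 rw,noatime 0 0\n"
    )
    for point in ("/", "/data/amps"):
        opts = mount_options(sample, point)
        print(point, sorted(opts), "atime updates:", updates_access_times(opts))
```

Whether turning off journaling or access-time updates is acceptable depends on your durability and tooling requirements, so treat this as a way to see what you have, not a recommendation for what to set.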

Multi-Tenant Environments: If you plan on running multiple AMPS instances on the same host, or other applications alongside an AMPS instance, you’ll want to be careful to isolate the load or provide enough capacity to achieve your objectives. It’s a common error to benchmark systems independently and then deploy them together without accounting for how resources degrade non-linearly under load.

So, Do I Need an SSD or Not?

TL;DR: Here are some questions to help determine if you truly need an SSD for your AMPS deployment. If you answer “Yes” to any of these, then you’re a great candidate for an SSD and it’s unlikely you’ll regret paying up for the SSD device.

Will you be operating multiple I/O heavy applications on the host?

Multi-tenant environments where performance matters can benefit from SSD and minimize the disruption of one service from I/O bursts of another.

Will you be storing more than 2x the host’s available memory on the device?

When the amount in storage vastly exceeds the host’s memory, system performance can be lost to OS virtual memory paging activity.

Do you plan on making heavy use of bookmark/replay subscriptions?

Or

Do you plan on using transaction log backed SOW topics?

Or

Do you plan on using high-velocity message queues?

The features above can approximate a randomized I/O workload and can benefit from SSD devices.

Will you be publishing bursts of events or messages at more than 100MB/s?

If you’re publishing message bursts at greater than 100MB/s and you’re latency sensitive, then you’ll need an SSD. If you’re publishing bursts of more than 200MB/s, you may want to look into a higher-end SSD/flash device.

Can you afford the monetary costs and sourcing lead-time?

SSDs are currently more expensive and the sourcing lead-time can be significant for some firms. If you need ample storage today, you may not have SSD as an option.
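If it helps to make the checklist mechanical, the questions above can be written down as a small function. This is only a sketch of the rules of thumb in this post (2x memory, 100MB/s, and 200MB/s). The `Deployment` fields and the function name are invented for the example, and the budget and lead-time question is left out because it is a constraint rather than a reason.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Deployment:
    """Hypothetical description of a planned AMPS deployment."""
    multiple_io_heavy_apps: bool
    stored_bytes: int
    host_memory_bytes: int
    uses_bookmark_replay: bool
    uses_txlog_backed_sow: bool
    uses_high_velocity_queues: bool
    burst_mb_per_sec: float


def ssd_reasons(d: Deployment) -> List[str]:
    """Return the checklist items that point toward an SSD (empty if none)."""
    reasons = []
    if d.multiple_io_heavy_apps:
        reasons.append("multiple I/O-heavy applications share the host")
    if d.stored_bytes > 2 * d.host_memory_bytes:
        reasons.append("stored data exceeds 2x host memory")
    if (d.uses_bookmark_replay or d.uses_txlog_backed_sow
            or d.uses_high_velocity_queues):
        reasons.append("features in use approximate random I/O")
    if d.burst_mb_per_sec > 200:
        reasons.append("bursts above 200MB/s: consider a higher-end SSD/flash device")
    elif d.burst_mb_per_sec > 100:
        reasons.append("bursts above 100MB/s")
    return reasons


if __name__ == "__main__":
    gib = 1024 ** 3
    plan = Deployment(False, 500 * gib, 64 * gib, True, False, False, 40.0)
    for reason in ssd_reasons(plan):
        print("SSD indicated:", reason)
```

An empty list does not mean an HDD is guaranteed to be enough. It only means none of the rules of thumb above fired for the deployment you described.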

Summary

Because of the differences in performance, cost, and availability, both HDD and SSD options continue to thrive. Every quarter the HDD vendors offer more capacity and a lower price while SSD vendors are cranking up capacity and performance. If you’re even asking the question “Do I need an SSD?” and you can buy an SSD device that matches your criteria for performance, capacity, budget, and time-to-market, then you should’ve already done it.

[Updated 08 September 2017: Clarify that queues can have a disk usage pattern similar to bookmark replay.]